About the CoMSES Model Library

Our mission is to help computational modelers develop, document, and share their computational models in accordance with community standards and good open science and software engineering practices. Model authors can publish their model source code in the Computational Model Library with narrative documentation as well as metadata that supports open science and emerging norms that facilitate software citation, computational reproducibility / frictionless reuse, and interoperability. Model authors can also request private peer review of their computational models. Models that pass peer review receive a DOI once published.

All users of models published in the library must cite model authors when they use and benefit from their code.

Please check out our model publishing tutorial and feel free to contact us if you have any questions or concerns about publishing your model(s) in the Computational Model Library.

We also maintain a curated database of over 7500 publications of agent-based and individual-based models, with detailed metadata on code availability and bibliometric information on the landscape of ABM/IBM publications, which we welcome you to explore.

Displaying 10 of 1000 results for "J Van Der Beek"

06b EiLab_Model_I_V5.00 NL

Garvin Boyle | Published Saturday, October 05, 2019

EiLab - Model I - is a capital exchange model. This is a type of economic model used to study the dynamics of modern money, which, strangely, are very similar to the dynamics of energetic systems. It is a variation on the BDY models first described in the paper by Dragulescu and Yakovenko, published in 2000, entitled “Statistical Mechanics of Money”. This model demonstrates the ability of capital exchange models to produce a distribution of wealth that does not have a preponderance of poor agents and a small number of exceedingly wealthy agents.

This is a re-implementation of a model first built in the C++ application called Entropic Index Laboratory, or EiLab. The first eight models in that application were labeled A through H, and are the BDY models. The BDY models all have a single constraint: a limit on how poor agents can be. That is to say, the wealth distribution is bounded on the left. This ninth model is a variation on the BDY models that adds a constraint limiting how wealthy an agent can be, so its wealth distribution is bounded on both the left and the right.

EiLab demonstrates the inevitable role of entropy in such capital exchange models, and can be used to examine the connections between changing entropy and changes in wealth distributions at a very minute level.

…
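The bounded capital exchange described above can be illustrated with a minimal Python sketch. It is not the EiLab or NetLogo code: the function names, the fixed transfer `amount`, the `floor` and `cap` bounds, and the use of Shannon entropy over wealth levels are assumptions made for illustration. Each event picks a random payer and payee, and the transfer is skipped if it would push the payer below the floor or the payee above the cap, so the distribution stays bounded on both sides and total wealth is conserved.

```python
import math
import random
from collections import Counter


def exchange_step(wealth, amount, floor, cap, rng):
    """One capital-exchange event between two randomly chosen agents.

    The payer gives `amount` to the payee only if the payer stays at or
    above `floor` and the payee stays at or below `cap`; otherwise nothing
    happens. Total wealth is therefore conserved.
    """
    payer, payee = rng.sample(range(len(wealth)), 2)
    if wealth[payer] - amount >= floor and wealth[payee] + amount <= cap:
        wealth[payer] -= amount
        wealth[payee] += amount


def wealth_entropy(wealth):
    """Shannon entropy (in nats) of how agents are spread over wealth levels."""
    n = len(wealth)
    counts = Counter(wealth)
    return -sum((c / n) * math.log(c / n) for c in counts.values())


def simulate(n_agents=500, start=100, amount=1, floor=0, cap=200,
             steps=100_000, seed=1):
    """Start everyone at equal wealth (entropy 0) and run many exchanges."""
    rng = random.Random(seed)
    wealth = [start] * n_agents
    for _ in range(steps):
        exchange_step(wealth, amount, floor, cap, rng)
    return wealth


if __name__ == "__main__":
    w = simulate()
    print("total wealth:", sum(w))      # unchanged by exchanges
    print("min/max:", min(w), max(w))   # stays within [floor, cap]
    print("entropy (nats):", round(wealth_entropy(w), 3))
```

Because every agent starts with the same wealth, the entropy begins at zero and rises as exchanges spread agents across wealth levels, which is the kind of link between entropy and wealth distribution that EiLab is described as exploring.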

University Connectivity

Rachel Wozniak, Nick Glover, Chris English, Juan Cisneros | Published Tuesday, November 30, 2021

This model is designed to show the effects that personality types and student organizations have on a student's chance of making friends in a university setting. As psychology studies have shown, extroverts find it easier to make friends than introverts do.

Once every tick, a pair of students (nodes) is selected at random. The pair then has a chance of becoming friends (an edge is created) or not, based on their personality types (the effect of each personality type is adjustable) and on whether they are in the same club (this value is also adjustable). The model then begins the next tick.
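One tick of the process described above might look like this in Python. The personality chances, the club bonus, and the rule of averaging the two students' personality chances are hypothetical placeholders for the model's adjustable values, not the authors' formula. Friendships are stored as unordered pairs, i.e. undirected edges.

```python
import random
from dataclasses import dataclass

# Hypothetical per-personality friendship chances (the model lets users set these).
PERSONALITY_CHANCE = {"extrovert": 0.30, "introvert": 0.10}
SAME_CLUB_BONUS = 0.20  # hypothetical extra chance for students in the same club


@dataclass
class Student:
    personality: str
    club: int


def friendship_probability(a, b):
    """Average the two personality chances, then add the club bonus if shared."""
    p = (PERSONALITY_CHANCE[a.personality] + PERSONALITY_CHANCE[b.personality]) / 2
    if a.club == b.club:
        p += SAME_CLUB_BONUS
    return min(p, 1.0)


def tick(students, friendships, rng):
    """Select one random pair and possibly add an undirected edge between them."""
    i, j = rng.sample(range(len(students)), 2)
    if rng.random() < friendship_probability(students[i], students[j]):
        friendships.add(frozenset((i, j)))


def run(n_students=100, n_clubs=5, ticks=2000, seed=0):
    rng = random.Random(seed)
    students = [Student(rng.choice(list(PERSONALITY_CHANCE)), rng.randrange(n_clubs))
                for _ in range(n_students)]
    friendships = set()
    for _ in range(ticks):
        tick(students, friendships, rng)
    return students, friendships
```

Using a `set` of `frozenset` pairs means a pair that is selected again after becoming friends does not create a duplicate edge.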

Evolutionary Prosocial Behavior Algorithm 1.1 (EPBA_1.1)

Andrea Ceschi | Published Tuesday, September 04, 2018

In order to test how prosocial strategies (compassionate altruism vs. reciprocity) grow over time, we developed an evolutionary simulation model where artificial agents are equipped with different emotionally-based drivers that vary in strength. Evolutionary algorithms mimic the evolutionary selection process by letting the chances of agents conceiving offspring depend on their fitness. Equipping the agents with heritable prosocial strategies allows for a selection of those strategies that result in the highest fitness. Since some prosocial attributes may be more successful than others, an initially heterogeneous population can specialize towards altruism or reciprocity. The success of particular prosocial strategies is also expected to depend on the cultural norms and environmental conditions the agents live in.
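Letting the chance of conceiving offspring depend on fitness is commonly implemented as roulette-wheel (fitness-proportional) selection. The Python sketch below shows that technique under stated assumptions: each agent is reduced to a hypothetical (strategy, strength) pair, fitness values are supplied from outside, and the Gaussian mutation of driver strength is our own choice. It is not the EPBA code.

```python
import random
from collections import Counter


def next_generation(population, fitness, rng, mutation_sd=0.05):
    """Fitness-proportional (roulette-wheel) reproduction with inheritance.

    population: list of (strategy, strength) tuples, e.g. ("altruism", 0.7)
    fitness:    non-negative fitness values, one per agent (not all zero)
    Offspring inherit the parent's strategy; the driver strength is copied
    with small Gaussian noise and clipped to [0, 1].
    """
    parents = rng.choices(population, weights=fitness, k=len(population))
    offspring = []
    for strategy, strength in parents:
        child = min(1.0, max(0.0, strength + rng.gauss(0.0, mutation_sd)))
        offspring.append((strategy, child))
    return offspring


if __name__ == "__main__":
    rng = random.Random(42)
    pop = [("altruism", 0.5)] * 50 + [("reciprocity", 0.5)] * 50
    # Hypothetical fitness values: reciprocators score slightly higher here.
    fit = [1.0 if s == "altruism" else 1.2 for s, _ in pop]
    print(Counter(s for s, _ in next_generation(pop, fit, rng)))
```

Repeating this step over many generations, with fitness computed from the agents' interactions, is what would let an initially mixed population drift towards one strategy.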

Peer reviewed MGA - Minimal Genetic Algorithm

Cosimo Leuci | Published Tuesday, September 03, 2019 | Last modified Thursday, January 30, 2020

Genetic algorithms try to solve a computational problem by following some principles of organic evolution. This model has educational purposes: it answers the simple arithmetic problem of finding the highest natural number composed of a given number of digits. We approach the task using a genetic algorithm in which the candidate solutions to the problem are represented by agents, which in the Logo programming environment are usually known as “turtles”.
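A genetic algorithm for this toy problem can be sketched in a few dozen lines of Python. The representation (a list of digits), tournament selection, one-point crossover, per-digit mutation, and elitism are common GA choices we assume here; the MGA itself may use different operators. Fitness is simply the value of the number the digits spell out, so the optimum for `n` digits is `n` nines.

```python
import random


def random_genome(n_digits, rng):
    return [rng.randrange(10) for _ in range(n_digits)]


def fitness(genome):
    # The value of the number the digits spell out; higher is better.
    return int("".join(map(str, genome)))


def tournament(pop, rng, k=3):
    # Pick k random candidates and keep the fittest.
    return max(rng.sample(pop, k), key=fitness)


def crossover(a, b, rng):
    # One-point crossover; genomes need at least 2 digits.
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def mutate(genome, rng, rate):
    # Each digit is replaced by a random digit with probability `rate`.
    return [rng.randrange(10) if rng.random() < rate else d for d in genome]


def evolve(n_digits=6, pop_size=40, generations=200, mutation_rate=0.05, seed=3):
    rng = random.Random(seed)
    pop = [random_genome(n_digits, rng) for _ in range(pop_size)]
    for _ in range(generations):
        best = max(pop, key=fitness)
        children = [best]  # elitism: the best candidate survives unchanged
        while len(children) < pop_size:
            child = crossover(tournament(pop, rng), tournament(pop, rng), rng)
            children.append(mutate(child, rng, mutation_rate))
        pop = children
    return max(pop, key=fitness)


if __name__ == "__main__":
    best = evolve()
    print("best candidate:", fitness(best))
```

Elitism guarantees that the best candidate never gets worse from one generation to the next, while mutation keeps introducing the 9s that crossover alone cannot create.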

On July 20, 2012, James Holmes committed a mass shooting at a midnight showing of The Dark Knight Rises. The Aurora, Colorado, shooting was used as a test case to validate this framework for modeling mass shootings.

Mobility, Resource Harvesting and Robustness of Social-Ecological Systems

Irene Perez Ibarra | Published Monday, September 24, 2012 | Last modified Saturday, April 27, 2013

The model is a stylized representation of a social-ecological system in which agents move and harvest a renewable resource. The purpose is to analyze how mobility affects sustainability. Experiments can be run that vary the agents' mobility, the landscape, and the information that governments have.

Hyperconnectivity, and Fact-Checking - Modeling Witnessing as a Traditional Coast Salish Mechanism

Adam Rorabaugh | Published Thursday, May 01, 2025

An unintended consequence of low-cost maritime travel may be hyperconnectedness, creating social situations where information can be readily passed on before it is verified, an issue not limited to modern digitally connected societies. In traditional Coast Salish societies, the peoples of what is now Western Washington and Southwestern British Columbia, oral traditions were verified through a process called witnessing. Witnesses were trained to recount and verify oral history and traditional teachings at high fidelity. Here, a simple model based on dual inheritance approaches to genes and culture is used to compare this specific form of verifying socially important information with modern mass communication. The model suggests that witnessing is a high-fidelity form of transmitting knowledge with a low error rate, more in line with modern apprenticeships than with mass communication. Social mechanisms such as witnessing provide solutions to issues faced in contemporary discourse, where the validity of information and even fact-checking mechanisms may be biased or counterfactual. This effort also demonstrates the utility of modeling approaches for highlighting how specific, historically contingent institutions such as witnessing can be drawn upon to model potential solutions to contemporary issues that were solved in the past in traditional Coast Salish practice.

Replica of Turchin's (2003) Metaethnic Frontier model

Paul Smaldino | Published Sunday, February 15, 2026

In his 2003 book, Historical Dynamics (ch. 4), Turchin describes and briefly analyzes a spatial ABM of his metaethnic frontier theory, which is essentially a formalization of a theory proposed by Ibn Khaldun in the 14th century. In the model, polities compete with neighboring polities and can absorb them into an empire. Groups possess “asabiya”, a measure of social solidarity and a sense of shared purpose. Regions that share borders with other groups have increased asabiya due to salient us vs. them competition. High asabiya enhances a group's ability to grow, work together, and hence wage war on neighboring groups and assimilate them into an empire. The larger the frontier, the higher the empire’s asabiya.

As an empire expands, (1) increased access to resources drives further growth; (2) internal conflict decreases asabiya among those who live far from the frontier; and (3) expanded size of the frontier decreases ability to wage war along all frontiers. When an empire’s asabiya decreases too much, it collapses. Another group with more compelling asabiya eventually helps establish a new empire.
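The asabiya dynamics described above can be written as two update rules plus a distance-decaying power function. The Python below is a sketch based on our reading of Turchin's chapter, not Smaldino's code; the parameter names and values (`R0`, `DELTA`, `H`, `S_CRIT`) are illustrative assumptions. Frontier cells follow logistic growth, interior cells decay exponentially, and an empire's projected power falls off exponentially with distance from its center, which is one way to express why a larger frontier weakens the ability to wage war everywhere at once.

```python
import math

# Illustrative parameter values (assumptions, not taken from the model code).
R0 = 0.2        # asabiya growth rate on frontier cells
DELTA = 0.1     # asabiya decay rate in interior cells
H = 2.0         # distance scale over which projected power decays
S_CRIT = 0.003  # an empire collapses when its mean asabiya drops below this


def update_asabiya(s, on_frontier):
    """Logistic growth on the frontier, exponential decay in the interior."""
    if on_frontier:
        return s + R0 * s * (1.0 - s)
    return s - DELTA * s


def power_at(area, mean_asabiya, distance):
    """Power an empire of `area` cells can project `distance` cells from its center."""
    return area * mean_asabiya * math.exp(-distance / H)


def collapses(cell_asabiya):
    """True when the empire's mean asabiya has fallen below the critical level."""
    return sum(cell_asabiya) / len(cell_asabiya) < S_CRIT


if __name__ == "__main__":
    frontier, interior = 0.1, 0.1
    for _ in range(20):
        frontier = update_asabiya(frontier, True)
        interior = update_asabiya(interior, False)
    print(f"frontier: {frontier:.3f}, interior: {interior:.3f}")
```

In a full spatial model, these rules would be applied cell by cell each step, with conquest decided by comparing the power that neighboring polities project onto a contested cell.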

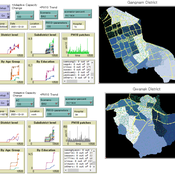

An Agent-based Assessment of Health Vulnerability to Long-term particulate exposure in Seoul Districts

Hyesop Shin, Mike Bithell | Published Monday, November 05, 2018 | Last modified Monday, December 03, 2018

This model aims to understand the cumulative effects of exposure to PM10 (particulate matter with a diameter of less than 10 micrometres) on the vulnerability of different age and educational groups of the population in two Seoul districts, Gangnam and Gwanak. Using this model, readers can explore individuals' daily commuting routines and the health loss that occurs when the PM10 concentration of the current patch exceeds the national limit of 100 µg/m³.
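The exposure rule in the description (health is lost when the current patch exceeds 100 µg/m³) can be sketched as follows. The schedule representation, the fixed `loss_per_step`, and the `vulnerability` multiplier standing in for age and education effects are hypothetical; the actual model's health-loss function may differ.

```python
PM10_LIMIT = 100.0  # µg/m³, the national limit cited in the model description


def daily_exposure(schedule, pm10_by_location, health, loss_per_step,
                   vulnerability=1.0):
    """Walk through one day's schedule and subtract health at over-limit locations.

    schedule:         list of location names, one per time step (e.g. hourly)
    pm10_by_location: dict mapping location -> PM10 concentration that day
    loss_per_step:    hypothetical health decrement per over-limit time step
    vulnerability:    hypothetical multiplier standing in for age/education effects
    """
    for place in schedule:
        if pm10_by_location[place] > PM10_LIMIT:
            health -= loss_per_step * vulnerability
    return max(health, 0.0)  # health is not allowed to go negative


if __name__ == "__main__":
    pm10 = {"home": 80.0, "office": 120.0}
    day = ["home"] * 8 + ["office"] * 9 + ["home"] * 7  # a hypothetical commute
    print(daily_exposure(day, pm10, health=100.0, loss_per_step=0.1))
```

Calling this once per simulated day accumulates the losses, which is the cumulative effect the model is designed to study.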

Confirmation Bias improves Performance in a Signal Detection Task and evolves in an Evolutionary Algorithm

Michael Vogrin | Published Monday, May 08, 2023

Confirmation bias is usually seen as a flaw of the human mind. However, in some tasks it may also increase performance. Here, agents are confronted with a number of binary signals (A or B). They have a base detection rate, e.g. 50%, and after they detect one signal, they become biased towards that type of signal. This means that they observe that kind of signal a bit better and the other kind a bit worse. The size of this effect is set by a variable called “bias_effect”, e.g. 10%. So an agent who detects A first becomes biased towards A, and its chance of detecting A signals rises by 10 percentage points. Thus, this agent detects A signals with a probability of 50% + 10% = 60% and B signals with a probability of 50% - 10% = 40%.

Given such a framework, agents that have the ability to be biased have better results in most of the scenarios.
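The worked example above (a 50% base rate shifted by a 10-percentage-point `bias_effect`) can be reproduced in a short Python sketch. Apart from `bias_effect`, the names are hypothetical, and the assumption that the first detected signal fixes the bias permanently is ours; the published model may update the bias differently.

```python
import random


def detection_probs(base, bias_effect, bias):
    """Return (p_detect_A, p_detect_B) for an agent biased toward "A", "B", or None."""
    if bias == "A":
        return base + bias_effect, base - bias_effect
    if bias == "B":
        return base - bias_effect, base + bias_effect
    return base, base


def run_agent(signals, base, bias_effect, can_be_biased, rng):
    """Count detected signals; the first detection fixes the bias (if allowed)."""
    bias = None
    detected = 0
    for s in signals:
        p_a, p_b = detection_probs(base, bias_effect, bias)
        p = p_a if s == "A" else p_b
        if rng.random() < p:
            detected += 1
            if can_be_biased and bias is None:
                bias = s
    return detected


if __name__ == "__main__":
    print(detection_probs(0.5, 0.1, "A"))  # the 50% + 10% / 50% - 10% case
    rng = random.Random(7)
    # Hypothetical scenario: about 80% of signals are A.
    signals = ["A" if rng.random() < 0.8 else "B" for _ in range(1000)]
    biased = sum(run_agent(signals, 0.5, 0.1, True, rng) for _ in range(200)) / 200
    unbiased = sum(run_agent(signals, 0.5, 0.1, False, rng) for _ in range(200)) / 200
    print(f"mean detections, biased: {biased:.1f}, unbiased: {unbiased:.1f}")
```

Whether the biased agent comes out ahead depends on the signal mix: when one signal type dominates, the first detection is likely to be of that type, so the bias tends to favor the more frequent signal.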
